This blog post is outdated; have a look at the update to it, which can be found here.

Workflow as a Function Flow powered by a completely managed service - Google Cloud Run

Introduction

Workflow as a Function Flow is a powerful concept that lets you build business logic as a complete, end-to-end use case while taking advantage of serverless platforms based on Knative to provide highly scalable and resilient infrastructure.

By default, Workflow as a Function Flow communicates directly with the Knative Broker to publish events and, by doing so, trigger function invocations. This is not possible in a managed service such as Google Cloud Run, so Google PubSub is introduced to run with the same characteristics. This requires some additional configuration steps, as relying on the Cloud Event type attribute as the trigger is not enough.

In addition to the type attribute, which is the main trigger, the source attribute of a Cloud Event is also used. Luckily, Cloud Run with PubSub puts the topic information (where the message came from) into the source attribute of the Cloud Event it delivers. Automatiko in turn uses this to look up the proper function to invoke based on the Cloud Event attributes (type and source). Because of that, the workflow and the nodes that compose its functions must be additionally annotated to provide the topic information as a source attribute to filter on. All the details can be found in the Configuration section of this article.
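To make the lookup idea more concrete, here is a minimal sketch of matching an incoming Cloud Event against registered functions using both type and source. It is an illustration only and not Automatiko's actual implementation: `FunctionEntry`, `topic_source`, `find_function`, the project id `my-project` and the second topic name are hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Iterable, Optional

# Type carried by every event that Cloud Run delivers from a PubSub trigger.
PUBSUB_EVENT_TYPE = "google.cloud.pubsub.topic.v1.messagePublished"


@dataclass(frozen=True)
class FunctionEntry:
    """Hypothetical registry entry: a function and the attributes it filters on."""
    name: str
    event_type: str
    source: str


def topic_source(project_id: str, topic: str) -> str:
    """Build the source value in the format used by the functionFilter attribute."""
    return f"//pubsub.googleapis.com/projects/{project_id}/topics/{topic}"


def find_function(registry: Iterable[FunctionEntry],
                  event_type: str, source: str) -> Optional[FunctionEntry]:
    """Return the first function whose type AND source both match the event."""
    for entry in registry:
        if entry.event_type == event_type and entry.source == source:
            return entry
    return None


if __name__ == "__main__":
    registry = [
        FunctionEntry("userRegistration", PUBSUB_EVENT_TYPE,
                      topic_source("my-project", "io.automatiko.examples.userRegistration")),
        FunctionEntry("generateUsername", PUBSUB_EVENT_TYPE,
                      topic_source("my-project", "io.automatiko.examples.generateUsername")),
    ]
    incoming = topic_source("my-project", "io.automatiko.examples.generateUsername")
    match = find_function(registry, PUBSUB_EVENT_TYPE, incoming)
    print(match.name if match else "no function registered for this event")
```

Since every entry shares the same type, the source is what actually tells the functions apart in this setup.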

A complete example can be found in this repository

Get started with Google Cloud Run

Have a look at the Google Cloud Run documentation to get started with the offering as such. Once you have an environment ready to use, you can try it out with an Automatiko service running as a Function Flow.

Configuration

Dependencies

Configuration of the project is rather simple. Once you create a project based on automatiko-function-flow-archetype, there is one additional dependency needed:

<dependency>
  <groupId>io.automatiko.extras</groupId>
  <artifactId>automatiko-gcp-pubsub-sink</artifactId>
</dependency>

This dependency brings in the Google PubSub event sink, which publishes events for each function invocation during the instance execution. All data will flow through the PubSub topics, so they must be created in advance.

Topics in PubSub

Each function invocation will use a dedicated topic, so you need to create these topics using either the Google Cloud Console or the Google Cloud CLI.

Names of the topics are up to you to define, though it is common to use a format similar to package names, e.g. io.automatiko.examples.userRegistration
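If you go the CLI route, the commands can be generated from a list of topic names. The sketch below only builds the `gcloud pubsub topics create` command lines as strings so they can be reviewed before running them. The project id `my-project` and the second topic name are made up for illustration; use the topics your workflow really needs.

```python
import shlex
from typing import Iterable, List


def create_topic_commands(project_id: str, topics: Iterable[str]) -> List[str]:
    """Return one 'gcloud pubsub topics create' command line per topic name."""
    return [
        f"gcloud pubsub topics create {shlex.quote(topic)} "
        f"--project={shlex.quote(project_id)}"
        for topic in topics
    ]


if __name__ == "__main__":
    topics = [
        "io.automatiko.examples.userRegistration",   # name taken from the article
        "io.automatiko.examples.generateUsername",   # hypothetical second function topic
    ]
    for command in create_topic_commands("my-project", topics):
        print(command)
```

Printing the commands instead of executing them keeps the sketch free of side effects; paste the output into a shell where the Google Cloud CLI is authenticated.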

Link functions with topics - via workflow

Once the topics are defined, they need to be linked to functions. That is done via custom attributes of both the process and the nodes that will form the functions.

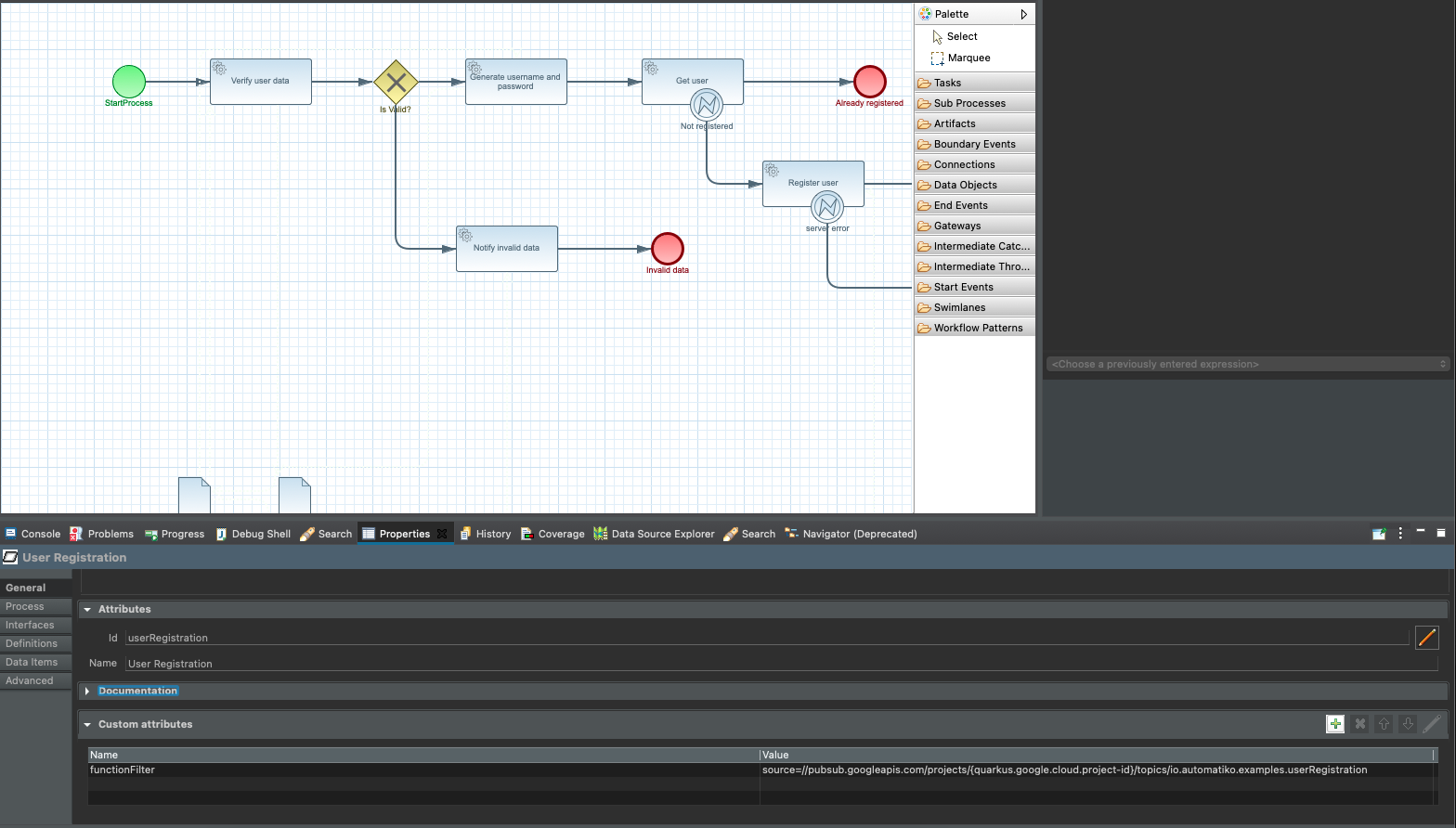

The entry point of the workflow is the first function we need to link to a topic. To do so, add the following custom attribute on the process level (just click on the canvas and open the properties panel).

Custom attributes on process level with function filter.

- Name of the attribute - functionFilter

- Value of the attribute - source=//pubsub.googleapis.com/projects/{quarkus.google.cloud.project-id}/topics/TOPIC_NAME

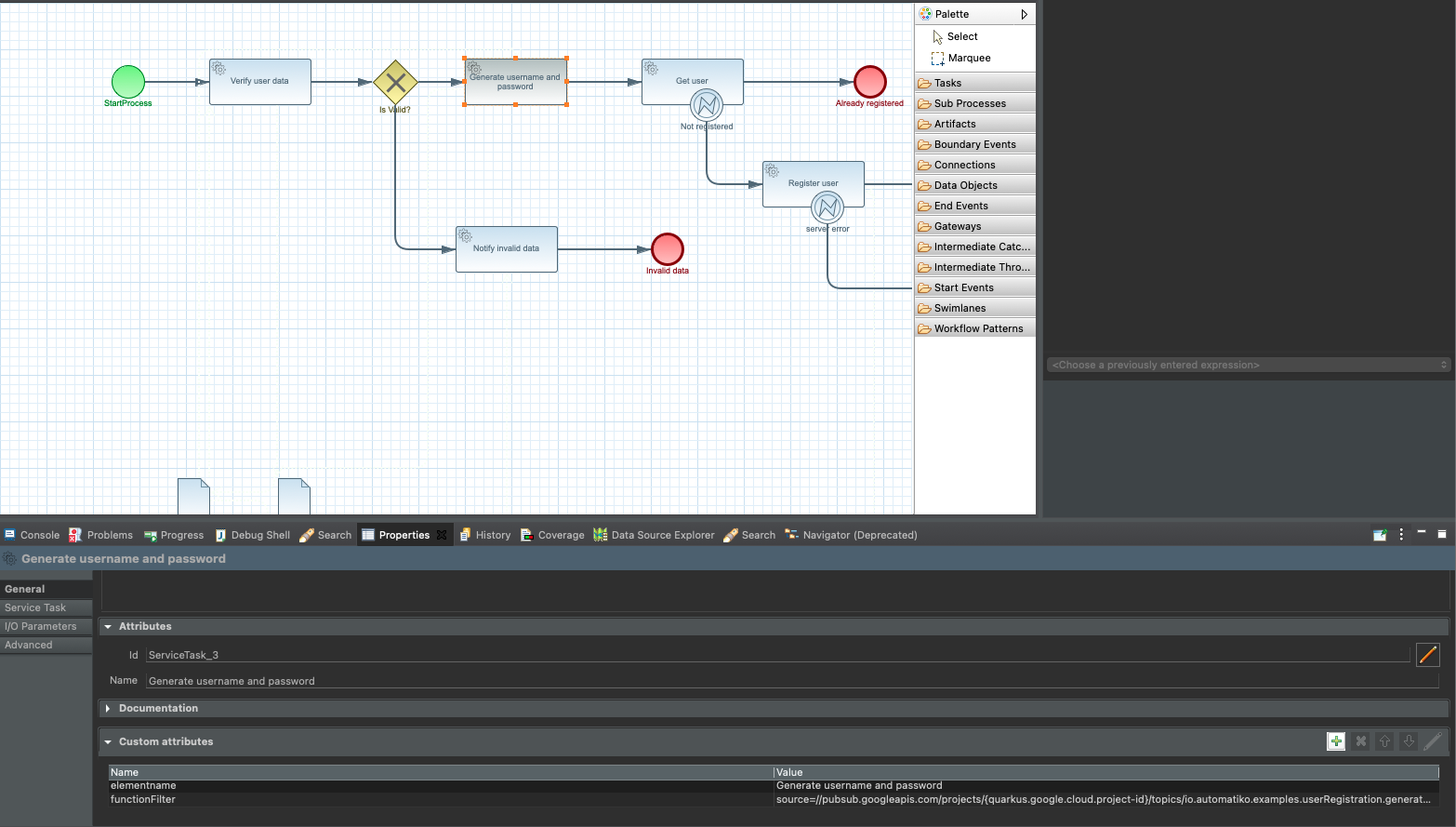

Do the same for all functions defined in your workflow (via tasks).

Custom attributes on task level with function filter.

A small explanation is needed when it comes to this functionFilter attribute. It defines that a given function will react to events when they come from a given topic, where

- TOPIC_NAME must be replaced with the actual name of the topic created

- {quarkus.google.cloud.project-id} will be automatically replaced at build time based on the project id configured

All events delivered from PubSub carry the same type attribute, and that is google.cloud.pubsub.topic.v1.messagePublished. That's why the extra filtering is required to be able to properly look up functions to invoke for incoming events.

This is the main difference from a vanilla Knative setup, where access to the broker is allowed and thus the type attribute is the only thing needed.
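The build-time replacement of {quarkus.google.cloud.project-id} can be pictured as a simple placeholder expansion. The following is a rough sketch of that idea, not the code Automatiko runs during the build. `expand_placeholders` and the project id `my-project` are assumptions made for this example.

```python
import re
from typing import Mapping

# Matches {some.property.name} placeholders inside an attribute value.
_PLACEHOLDER = re.compile(r"\{([^{}]+)\}")


def expand_placeholders(value: str, properties: Mapping[str, str]) -> str:
    """Replace every {key} in value with properties[key]; fail on unknown keys."""
    def lookup(match: "re.Match[str]") -> str:
        key = match.group(1)
        if key not in properties:
            raise KeyError(f"missing configuration property: {key}")
        return properties[key]

    return _PLACEHOLDER.sub(lookup, value)


if __name__ == "__main__":
    # Attribute value as typed in the workflow editor, with TOPIC_NAME already
    # replaced by hand with the real topic name.
    function_filter = (
        "source=//pubsub.googleapis.com/projects/"
        "{quarkus.google.cloud.project-id}/topics/"
        "io.automatiko.examples.userRegistration"
    )
    properties = {"quarkus.google.cloud.project-id": "my-project"}  # hypothetical id
    print(expand_placeholders(function_filter, properties))
```

Failing on an unknown key is a design choice of this sketch: a filter with an unresolved placeholder would never match any incoming event, so an early error is easier to diagnose.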

Project configuration

The project configuration that resides in src/main/resources/application.properties must have the following defined

quarkus.automatiko.target-deployment=gcp-pubsub

quarkus.google.cloud.project-id=YOUR_PROJECT_ID

YOUR_PROJECT_ID must be replaced with the actual id of the project that this service will be deployed to.

Build and Deployment

Once the configuration of the service is done and the Google Cloud Platform account is set up (topics included), it's time to build and deploy the service. Building it is as easy as running a single command.

mvn clean package -Pcontainer-native

NOTE: This command requires Docker to be installed, as it builds a Docker container with a native image in it.

Once the service is built as a Docker image, you can push it to a container registry. As an example, you can use these two commands to push it to the Google Cloud Platform container registry.

docker tag IMAGE gcr.io/GOOGLE_PROJECT_ID/automatiko/user-registration-gcp-cloudrun:latest

docker push gcr.io/GOOGLE_PROJECT_ID/automatiko/user-registration-gcp-cloudrun:latest

The following must be replaced with actual values matching your service and Google project

- IMAGE - image id of the built container image

- GOOGLE_PROJECT_ID - id of your Google Cloud Platform project

- automatiko/user-registration-gcp-cloudrun - container image name

- latest - container image tag

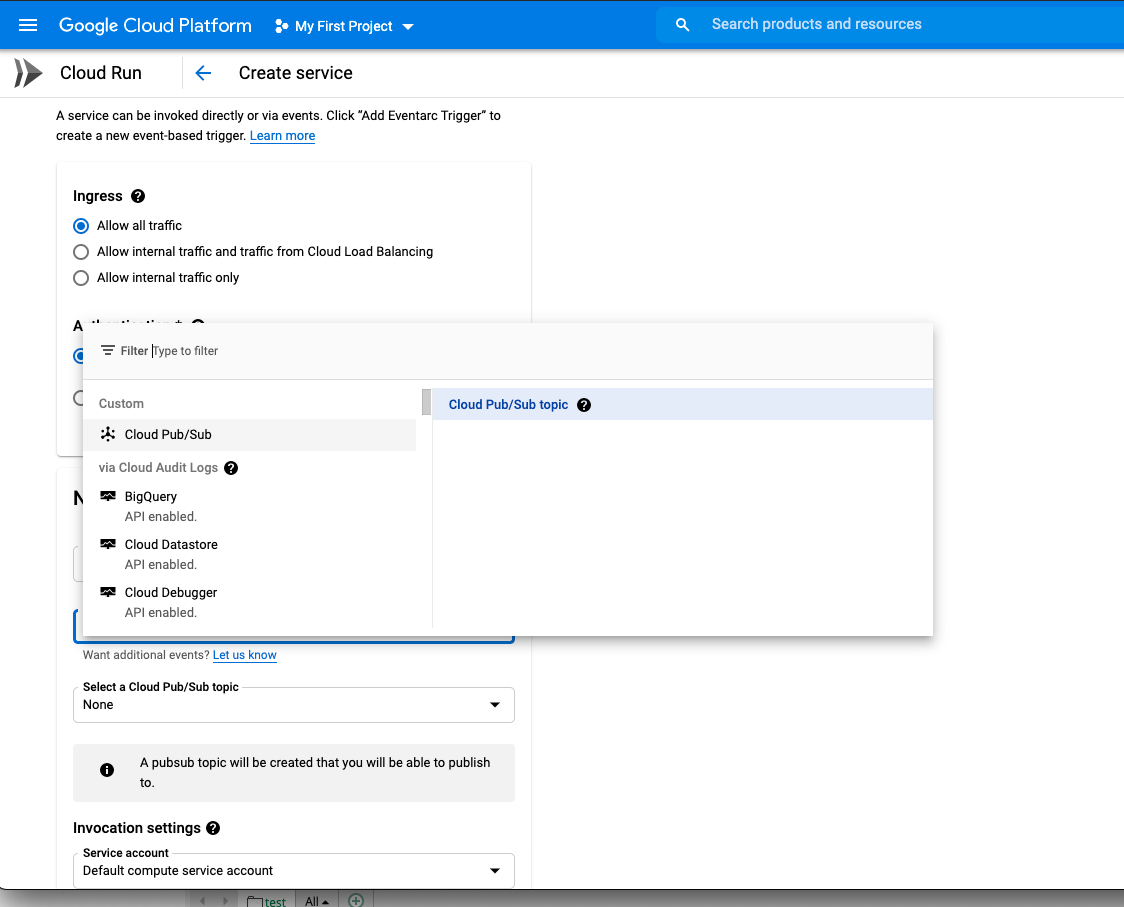

Essentially, deployment comes down to following the wizard in the Google Cloud Console, with the important note to create event triggers for each function. These event triggers are of type:

- Cloud Pub/Sub

- Cloud Pub/Sub topic

Create triggers for Cloud Run service.

This should be done for all the topics that are linked to functions in your workflow.

The following video shows the steps to deploy to Google Cloud Run in detail.

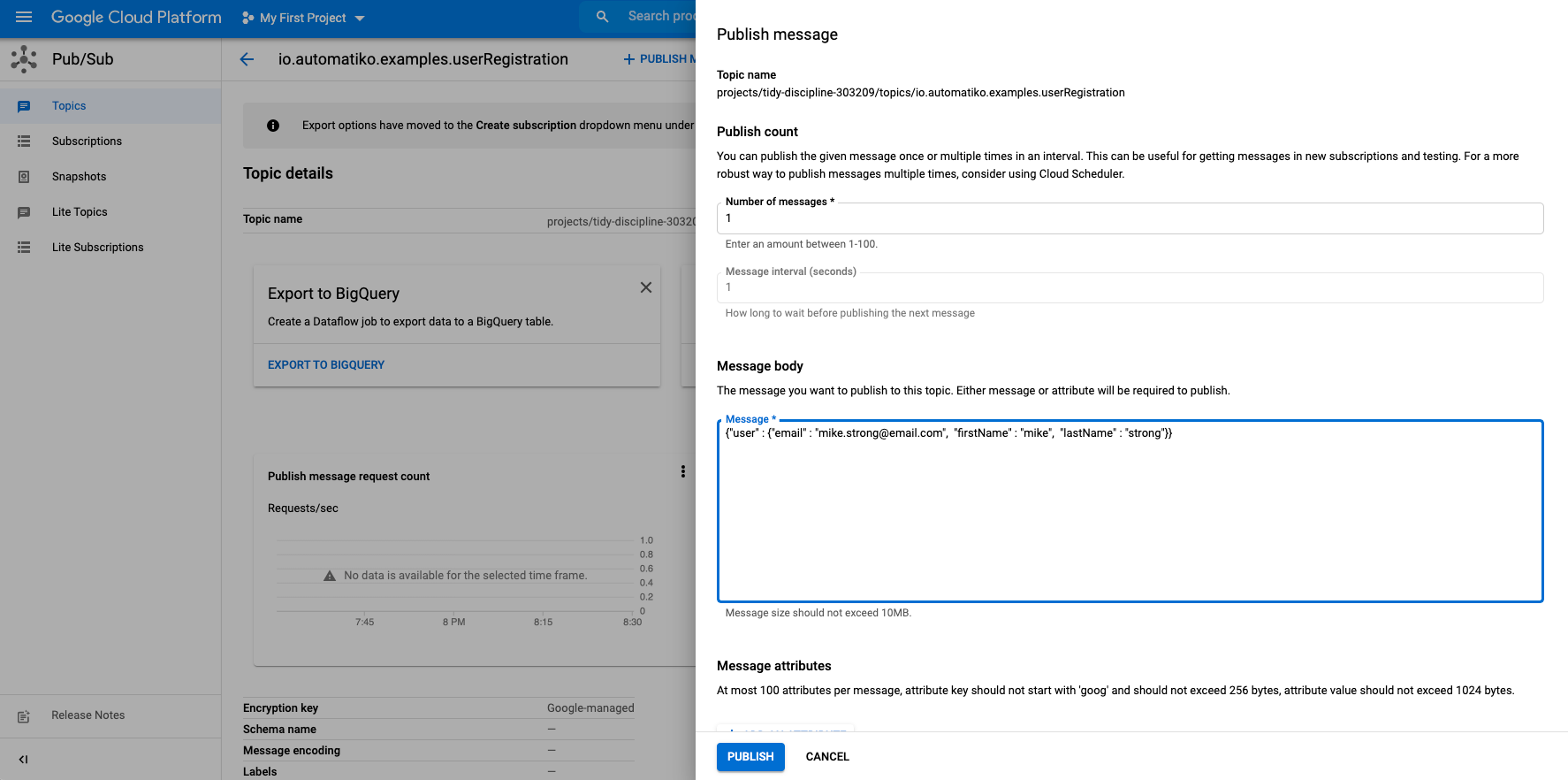

Execution

To start workflow execution (which will execute as a function flow), publish a new message to the topic that is linked with the workflow.

Publish message to start workflow execution.
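Besides the Cloud Console, a message can also be published with the `gcloud pubsub topics publish` command. The sketch below builds such a command line as a string. The JSON payload shape, the project id `my-project` and the helper name `publish_command` are purely illustrative assumptions; the data your workflow expects depends on its own data model.

```python
import json
import shlex
from typing import Any, Mapping


def publish_command(project_id: str, topic: str, payload: Mapping[str, Any]) -> str:
    """Build a 'gcloud pubsub topics publish' command that sends payload as JSON."""
    message = json.dumps(payload, separators=(",", ":"))
    return " ".join([
        "gcloud", "pubsub", "topics", "publish", shlex.quote(topic),
        f"--project={shlex.quote(project_id)}",
        f"--message={shlex.quote(message)}",   # quoted so the shell passes the JSON intact
    ])


if __name__ == "__main__":
    # Hypothetical payload; replace it with the data your workflow works on.
    payload = {"user": {"email": "john@example.com"}}
    print(publish_command("my-project", "io.automatiko.examples.userRegistration", payload))
```

The topic used here must be the one referenced in the functionFilter attribute set on the process level, since that is the entry point of the workflow.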

Conclusion

This article introduced you to using Automatiko and its Workflow as a Function Flow on Google Cloud Run as a managed Knative environment. To work around the limitation of not having direct access to the Knative Broker, it uses Google PubSub as the way of exchanging events, which provides an efficient and highly scalable infrastructure.

This is just the beginning, and a number of improvements are in the works, such as automatic generation of scripts to create topics and deploy the service with triggers. So stay tuned and let us know (via Twitter or the mailing list) what you would like to see more of in this area.

Photographs by Unsplash.